CURVELET-BASED FREQUENCY-AWARE FEATURE ENHANCEMENT FOR DEEPFAKE DETECTION

Abstract

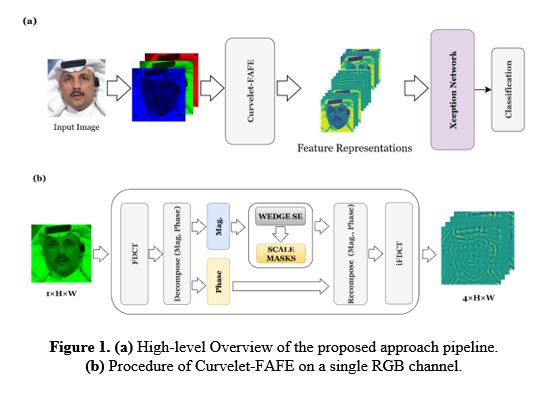

The proliferation of sophisticated generative models has significantly advanced the realism of synthetic facial content, known as deepfakes, raising serious concerns about digital trust. Although modern deep learning-based detectors perform well, many rely on spatial-domain features that degrade under compression. This limitation has prompted a shift toward integrating frequency-domain representations with deep learning to improve robustness. Prior research has explored frequency transforms such as the Discrete Cosine Transform (DCT), the Fast Fourier Transform (FFT), and the Wavelet Transform. However, to the best of our knowledge, the Curvelet Transform, despite its superior directional and multiscale properties, remains unexplored in the context of deepfake detection. In this work, we introduce a novel Curvelet-based detection approach that enhances feature quality through wedge-level attention and scale-aware spatial masking, both trained to selectively emphasize discriminative frequency components. The refined frequency cues are reconstructed and passed to a modified pretrained Xception network for classification. Evaluated at two compression levels of the challenging FaceForensics++ (FF++) dataset, our method achieves 98.48% accuracy and 99.96% AUC on the low-compression setting while maintaining strong performance under high compression, demonstrating the efficacy and interpretability of Curvelet-informed forgery detection.
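The abstract does not give implementation details for the two refinement steps, so the following is only a minimal sketch of one plausible reading, not the authors' method. It assumes the Curvelet coefficients at a single scale are already available as a list of equally sized 2-D arrays (one per wedge), uses a squeeze-and-excitation-style gate as a stand-in for "wedge-level attention", and uses an element-wise sigmoid mask shared by all wedges of the scale as a stand-in for "scale-aware spatial masking". The names `wedge_attention`, `spatial_mask`, `w1`, `w2`, and `mask_logits` are hypothetical, and random numbers take the place of real coefficients and learned parameters.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def wedge_energy(wedge):
    """Mean absolute coefficient of one wedge (a 2-D list of floats)."""
    total = sum(abs(c) for row in wedge for c in row)
    count = sum(len(row) for row in wedge)
    return total / count


def wedge_attention(wedges, w1, w2):
    """Assumed SE-style gating: one weight in (0, 1) per wedge.

    wedges: list of 2-D lists, one per orientation at a single scale.
    w1: hidden x n_wedges matrix, w2: n_wedges x hidden matrix
        (hypothetical learned parameters).
    """
    desc = [wedge_energy(w) for w in wedges]                      # squeeze
    hidden = [max(0.0, sum(a * d for a, d in zip(row, desc)))     # ReLU layer
              for row in w1]
    gates = [sigmoid(sum(a * h for a, h in zip(row, hidden)))     # one gate per wedge
             for row in w2]
    scaled = [[[g * c for c in row] for row in w]
              for g, w in zip(gates, wedges)]
    return scaled, gates


def spatial_mask(wedge, mask_logits):
    """Apply an element-wise mask in (0, 1) to one wedge.

    mask_logits: 2-D list with the same shape as the wedge; in this sketch
    a single mask is shared by every wedge of the scale (an assumption).
    """
    return [[sigmoid(m) * c for c, m in zip(crow, mrow)]
            for crow, mrow in zip(wedge, mask_logits)]


# Toy data standing in for real Curvelet coefficients and trained weights.
random.seed(0)
n_wedges, n_hidden, height, width = 4, 2, 3, 3
wedges = [[[random.gauss(0.0, 1.0) for _ in range(width)]
           for _ in range(height)] for _ in range(n_wedges)]
w1 = [[random.gauss(0.0, 1.0) for _ in range(n_wedges)] for _ in range(n_hidden)]
w2 = [[random.gauss(0.0, 1.0) for _ in range(n_hidden)] for _ in range(n_wedges)]
mask_logits = [[0.0] * width for _ in range(height)]  # sigmoid(0) = 0.5 everywhere

attended, gates = wedge_attention(wedges, w1, w2)
masked = [spatial_mask(w, mask_logits) for w in attended]
```

In a full pipeline the refined coefficients would then go through an inverse Curvelet transform before reaching the classifier; that step is omitted here because it depends on the transform library in use.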

Authors

Copyright (c) 2026 Salar Adel Sabri and Ramadhan J. Mstafa

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Authors who publish with this journal agree to the following terms:

- Authors retain copyright and grant the journal right of first publication with the work simultaneously licensed under a Creative Commons Attribution License [CC BY-NC-SA 4.0] that allows others to share the work with an acknowledgment of the work's authorship and initial publication in this journal.

- Authors are able to enter into separate, additional contractual arrangements for the non-exclusive distribution of the journal's published version of the work, with an acknowledgment of its initial publication in this journal.

- Authors are permitted and encouraged to post their work online.